GPT-5 one week later: it’s the system

Much of the grumbling about GPT-5 misses the most important upgrade

The headlines weren’t exactly what OpenAI was hoping for when it launched GPT-5. The Financial Times asked, “Is AI hitting a wall?” The Washington Post raised similar questions. The Verge said GPT-5 “failed the hype test,” then sat down with OpenAI CEO Sam Altman over dinner to discuss what the publication called the “GPT-5 launch fiasco.”

CEOs don’t typically have dinner-views with reporters unless they’re A. trying to change the narrative or B. well, trying to change the narrative.

While I had some quibbles, my review of GPT-5, which focused on use cases for writers and content teams, was generally positive. So what happened?

System thinking

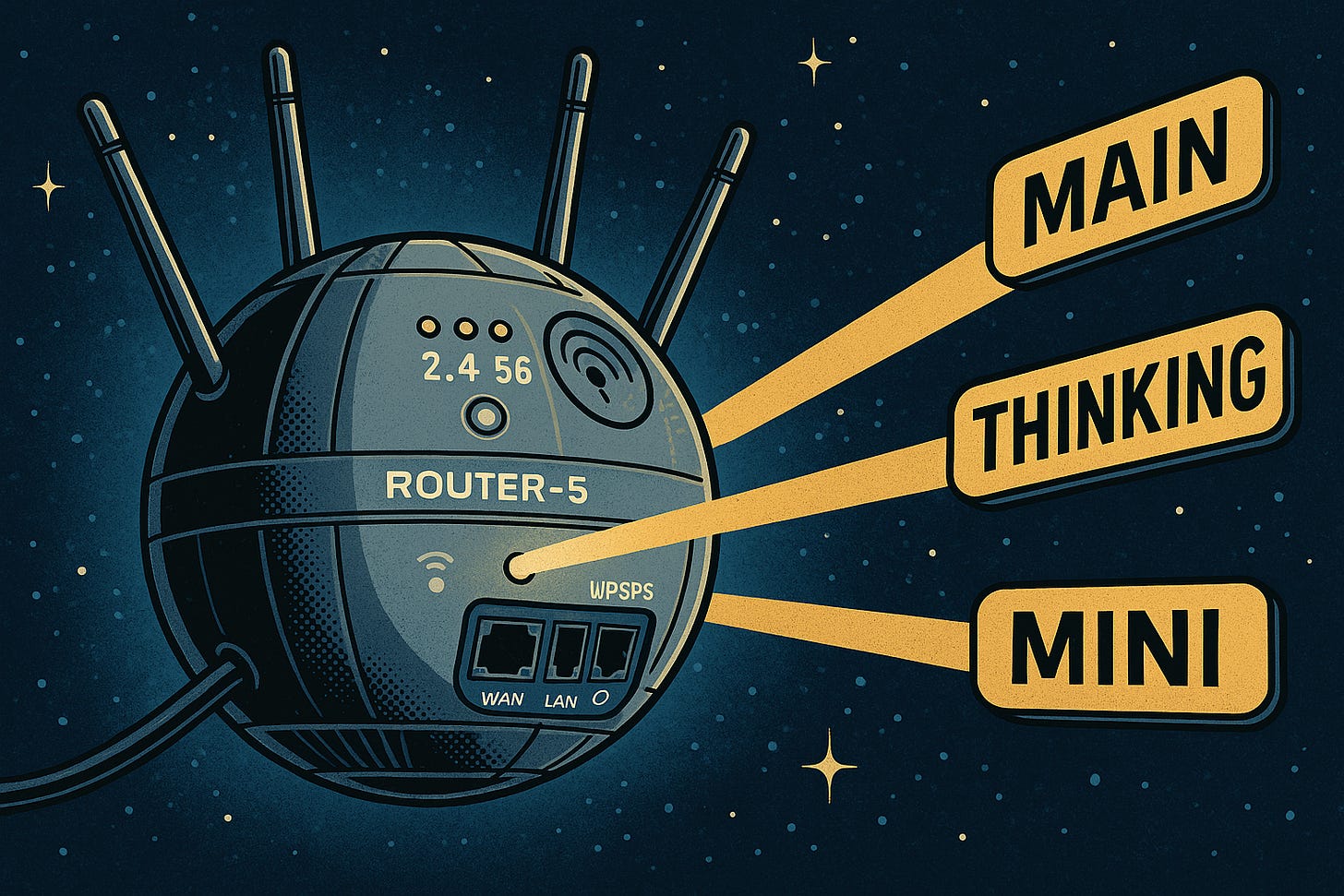

Many people have overlooked that GPT-5 is less a single model than a system. Based on the conversation and the user’s intent, a real-time router decides which model should answer: the fast “main” model, the deeper “thinking” model, or smaller variants. That’s a critical change in how ChatGPT works, and it matters for both usability and cost, because simpler queries can be routed to cheaper models.
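OpenAI hasn’t published how its router actually decides, so the sketch below is only a toy illustration of the general idea, not GPT-5’s implementation. The tier names (`mini`, `main`, `thinking`), the relative costs, the keyword cues, and the word-count threshold are all hypothetical assumptions chosen to show how routing can save money: cheap requests go to cheap models, and only requests that seem to need reasoning go to the expensive one.

```python
from dataclasses import dataclass


# Hypothetical model tiers. The names echo the fast "main" and deeper
# "thinking" models described above; the relative costs are invented
# numbers for illustration only.
@dataclass(frozen=True)
class ModelTier:
    name: str
    relative_cost: float


MINI = ModelTier("mini", 0.2)
MAIN = ModelTier("main", 1.0)
THINKING = ModelTier("thinking", 5.0)

# Assumed signals that a request needs deeper reasoning. A production
# router would more plausibly use a learned classifier than a keyword list.
REASONING_CUES = ("prove", "analyze", "step by step", "fact-check", "compare")


def route(prompt: str, user_wants_depth: bool = False) -> ModelTier:
    """Pick a model tier for one request using toy heuristics."""
    text = prompt.lower()
    # Explicit intent, or a reasoning cue anywhere in the prompt
    # (crude substring match), sends the request to the deep model.
    if user_wants_depth or any(cue in text for cue in REASONING_CUES):
        return THINKING
    # Very short requests go to the cheapest tier.
    if len(text.split()) < 8:
        return MINI
    # Everything else gets the fast default model.
    return MAIN


if __name__ == "__main__":
    requests = [
        "What's the capital of France?",
        "Fact-check the statistics in my draft about remote work trends",
        "Rewrite this paragraph so it sounds friendlier and less formal for a newsletter audience",
    ]
    routed = [route(r) for r in requests]
    for request, tier in zip(requests, routed):
        print(f"{tier.name:>8}: {request}")
    routed_cost = sum(tier.relative_cost for tier in routed)
    always_deep = THINKING.relative_cost * len(requests)
    print(f"Routed cost: {routed_cost:.1f} vs. always-thinking: {always_deep:.1f}")
```

Hand-written rules like these are cheap and easy to inspect, but brittle: a substring cue such as "prove" also matches "improve." That brittleness is one reason to expect a real router to learn from usage signals rather than rely on fixed rules.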

One of the most controversial changes, which deserved pushback, was the decision to remove the older models. This broke critical workflows and disrupted power users who wanted the flexibility to use different models depending on the task. Fortunately, OpenAI reversed this decision, with the older models returning rather quickly.

But manual model switching is no way to function at scale. Operationalizing this new system is likely the goal, and one that will have significant benefits over the long term.

The super router era

Also overlooked is how much this changes the way GPT-5 works. The router adds a new layer of intelligence that cuts response time and cost by sending each request to the right model. OpenAI is battling Anthropic and Google for enterprise adoption, and moves like this are essential to that fight. For users, having fewer models to choose from can also be freeing. Ignore the chatter and find the setup that works best for you.

Let’s also discuss the dreaded hallucinations. According to OpenAI’s system card, the main model produced 44% fewer answers with at least one significant factual error than GPT‑4o, and the deeper reasoning variant produced 78% fewer than o3. That’s tangible progress on one of LLMs’ most challenging problems.

I still encounter hallucinations, and social networks were filled with examples after GPT-5 launched. But the data tells a better story. I expect hallucinations to keep dropping and the models to keep getting faster. Today’s ceiling will be tomorrow’s floor.

Image struggles

If you were hoping those awkward misspellings and design oddities would disappear, prepare to be disappointed. My observations here are purely anecdotal, but I’ve had a worse experience with design since switching to GPT-5.

Misspellings are still a big problem, and in my testing, GPT-5 also struggled to add an icon to a logo.

Image generation is still a party trick for me, and I see a long way to go before it can replace design skills or a design team. Still, with Canva integrations in play, GPT-5 will need to improve in this area.

Welcome to the writer’s room

In the last week, I’ve been comparing GPT-5 Thinking with o3 to get a sense of whether the older model was actually better, as many Redditors have argued.

In short, GPT-5 Thinking still goes deeper and takes on more of the work than o3.

I fed the following prompt to both models: “Create a writer’s room to help with my writing. Create a team of personalities and perspectives that I can dialogue with who will debate my ideas, research, fact-check my writing, and assist in promotion.”

Both performed admirably at creating a room of experts to work on my writing. GPT-5 put more thought into the names, which were awkward in the o3 version. GPT-5 is also more of a doer. It scaffolded a strategy for summoning the members of the room, set parameters, and built a repeatable process for using this virtual team.

This experience is, of course, one anecdote. Given how LLMs work, we could run this prompt hundreds of times and get slightly different answers. Rather than a benchmark, I offer it as one example of where I’m finding that GPT-5 goes the extra mile and is more thoughtful than previous models.

Whenever possible, I also prefer the Thinking model, which gives answers with more depth and suggests where to push the work forward. If you can fit a Plus subscription into your budget or get one through your employer, Thinking or Thinking Mini is the best way to explore what’s possible when challenging and analyzing your writing or putting its research capabilities to work on your topics.

You are the solution

GPT-5 is powerful when employed as a writer’s analyst, editor, fact checker, and researcher (among the many personas of my writer’s room). If anything, this new model reaffirms humans' essential role in the AI loop.

The big leap is architectural. A system that routes, reasons when needed, and reduces error rates is a change worth celebrating. Humans will still set standards, context, and taste; the system just gets you there faster.