Google Pixel 10: the first AI-native smartphone?

The Pixel 10 launch is about building an ambient system that wows users with new AI smarts.

The smartphone is everyone’s most personal computer, which makes it the most consequential place for AI to prove itself. Google’s Pixel 10 launch on Wednesday, August 20, is a test of whether AI can truly feel native on a phone: fast, impressive, and secure.

The company already teased a camera coaching feature, in which Gemini will help you get a better picture than your untrained tendencies would allow.

Next, imagine taking the shot and then simply telling your phone how to edit it to perfection, with less hunting for features and tapping through menus.

With AI already deeply integrated into the phone through Google’s Gemini Nano model, which can run without an Internet connection, we can see an AI future that goes beyond rewriting emails or softening a harsh text.

The Decision Phone

Mobile is a critical implementation layer for AI. Just as people need to see the benefit and be “wowed” to start using AI at work, they need similar inspiration to trust it on their phones.

Google has the right framework: Gemini Nano runs AI directly on the device, so you still get AI smarts even without a signal.

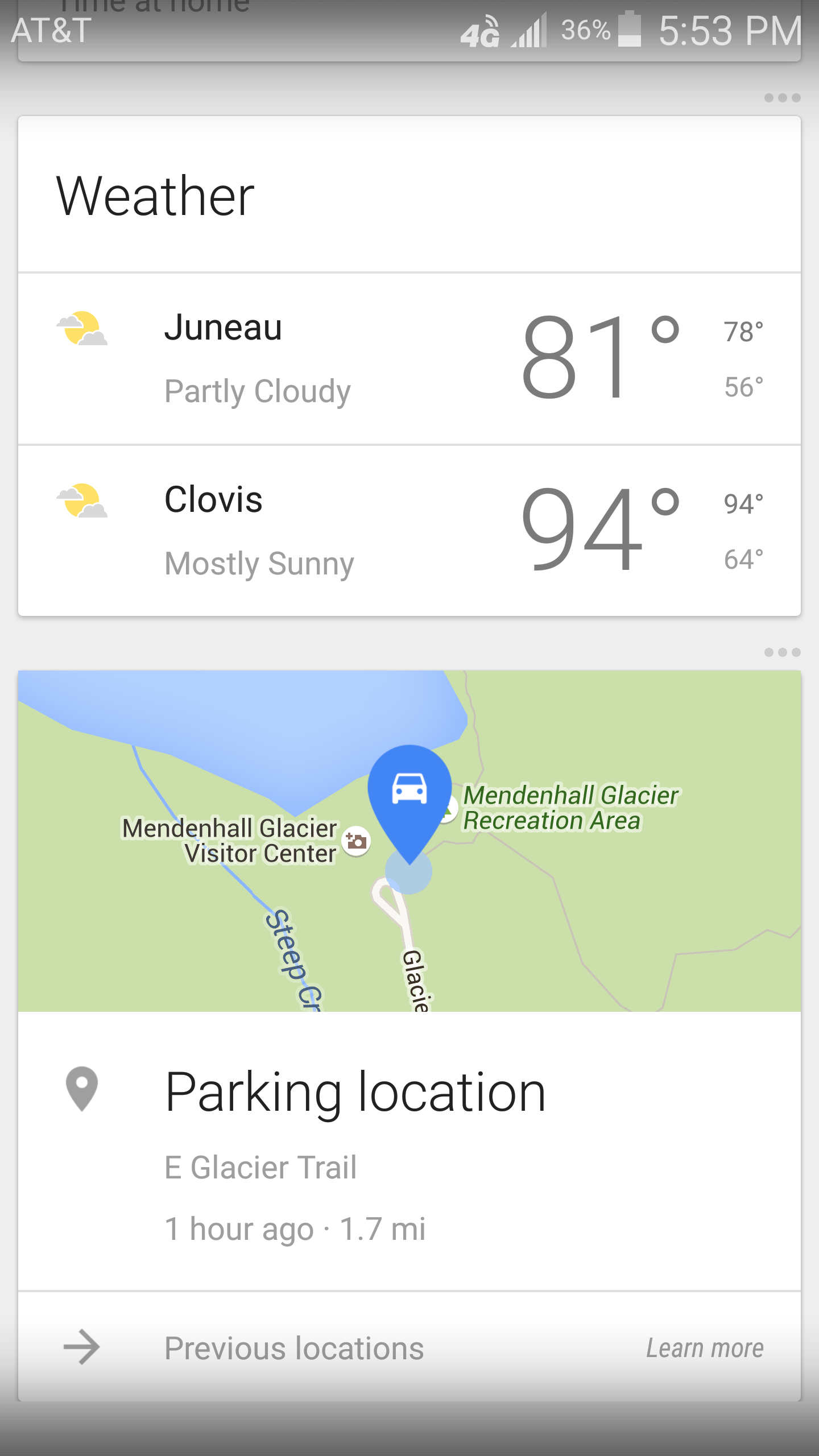

An AI-native phone must run efficiently on-device for speed and privacy while turning to the cloud for harder requests. It also needs to be genuinely smart: think of proactively reminding you of appointments, birthdays, and where you parked your car. Today, the smartphone experience is still hampered by hopping between apps and adding such tasks manually, or by reminders that get lost in a sea of notifications that drown out the signal.

A more intelligent assistant that cuts out the nonsense and surfaces what matters is where the Pixel 10 could shine.

A contrast in philosophies

Apple Intelligence on the iPhone is cautious. In keeping with Apple’s privacy-first approach, its implementation is incremental and tightly controlled. That’s good for users, but it doesn’t usher anyone into a meaningfully better experience with AI.

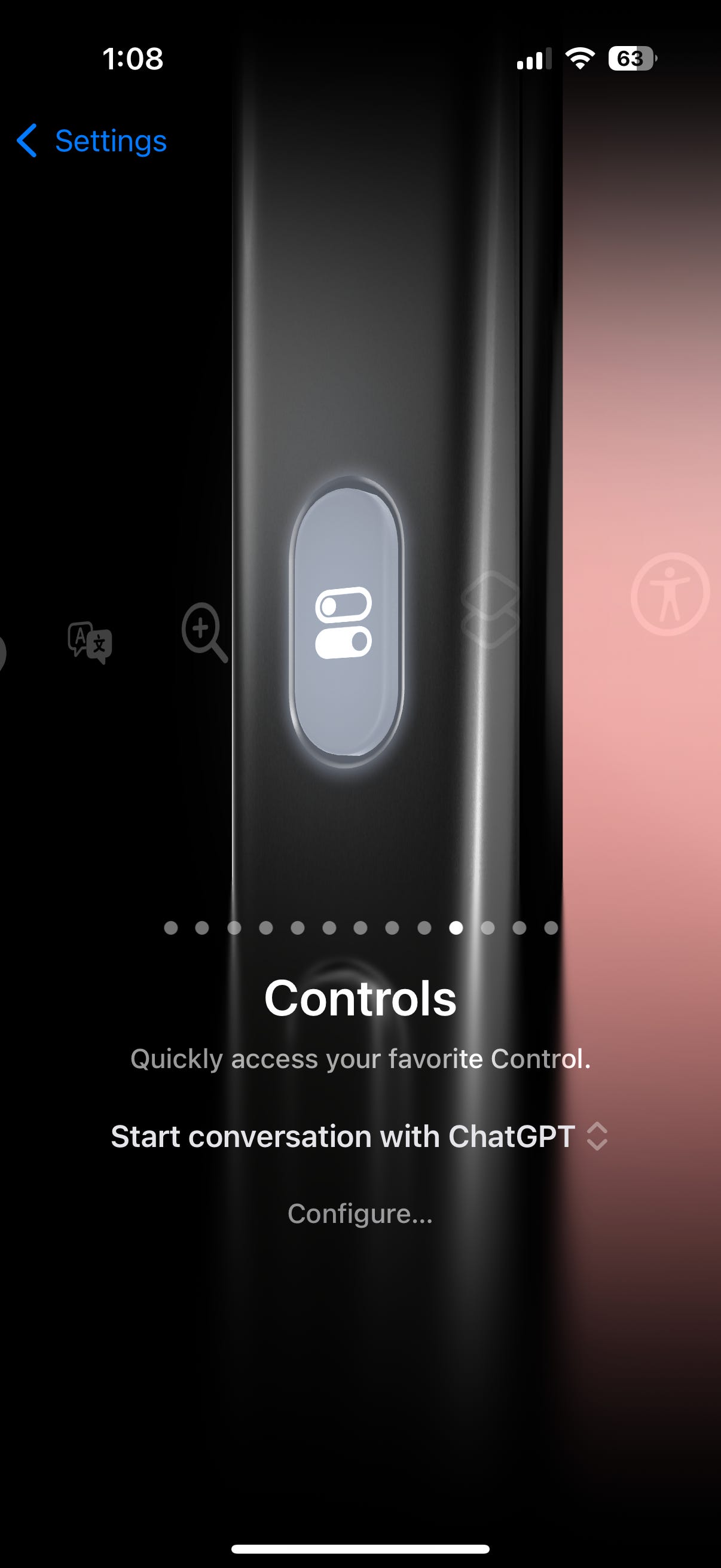

For example, I use AI on my iPhone by mapping the Action Button to ChatGPT. I bypass most Apple Intelligence features because they feel incremental.

The iPhone is one of the stickiest products of all time. Users won’t abandon their phones in droves for Google’s Pixel (blue bubble lock-in is real). What the Pixel launch should do is jolt Apple out of complacency. Along with the camera features, Google is rumored to bring a feature called Gemini Space that surfaces the upcoming information you need on your device in real time.

It would take up the mantle of the long-lost Google Now, which pulled flight details and calendar updates into a real-time feed. Although Google Now’s features were absorbed into Google Assistant, the experience has never felt quite the same.

Android has shone as a more ambient and proactive implementation of AI. I’ll be watching (and hoping) for that at the launch event.

Building trust in AI at a personal level

Trust is the foundation of one’s AI experience. What Google must get right is an implementation of Gemini that not only is easy to use and saves you time, but also delivers a real “wow” factor. You can already have Gemini find an email or add multiple events to a calendar with a quick voice command.

Apple has staked its case on privacy, to significant effect. Google’s opportunity is to deliver a new level of usefulness with Gemini. AI adoption requires simple, tangible features with easy wins.

ChatGPT has 800 million weekly active users because it created an AI that anyone could use. Of course, the number of people building agents or workflows is much smaller, since that learning curve requires specialization. Using Gemini should feel like the former, and once again, it should deliver real innovation that always puts a new form of intelligence on your side.